Apple Christmas - A WWDC Breakdown

Friends, I have to confess something. If you didn't already know, I'm a huge Apple fan. I like to think I'm the type of fan that's willing to call them out when they do something dumb, but I also genuinely do just really like their products. Currently I'm running a 2016 MacBook Pro, a 2018 iPad Pro, and an iPhone XS. I'm still waiting to hear the official hardware announcements from Apple, but the Mac and iPhone are probably getting upgraded soon, just because the Mac is at the low end of the specs since I was in college when I bought it, and I'm a mobile app developer and a photographer so I want to get the latest iPhone with the newest camera.

So, not gonna lie, Monday was kind of a big day for me, because it was the beginning of Apple's Worldwide Developers Conference, which starts with the keynote event where they announce some of their latest plans for the software and hardware. I look forward to every Apple event, to get an idea of what's coming.

If you want to know what Apple’s got coming up and my impression of it, keep reading! I believe I've listed everything from the event here, so if you don't want to spend two hours watching it or need a reference to review what went down, I've got you covered.

WWDC looks a bit different this year

WWDC traditionally has a live audience and in-person sessions following the keynote for developers to meet with Apple, but due to the pandemic it was moved entirely online this year. At the start of the keynote, as usual, the camera took us on to Apple’s campus and down the stairs into the Steve Jobs Theater, but on Monday all the seats were empty, and it was a bit eerie. I was curious to see how the lack of an audience would affect the presentation, since generally their reaction is part of the show, with some news bringing rounds of applause and other news bringing confusion, disappointment, or lack of interest. While we obviously missed out on the reaction of the audience, I do think Apple did a great job of distracting from the empty seats by choosing to locate the presenters in different locations throughout the campus.

A brief note about the state of the world

It seems hardly anything can happen anymore without addressing the crises of racism and COVID-19, and the Apple keynote was no exception. Tim Cook, Apple’s CEO, began the presentation by stating Apple’s commitment to diversity and thanking healthcare workers. You could call it political correctness, but sometimes political correctness is actually the correct thing to do.

Live or pre-recorded?

My original assumption was that the keynote would be given live, but the initial transition from Tim Cook to Craig suggested that the whole thing had been pre-recorded, and later transitions confirmed this theory, with Craig moving from one location to another extremely quickly, joking about it the first time. To keep our focus off the empty seats and take advantage of the online format, Apple chose to film the keynote in a variety of locations, which made it feel like we were getting a closer look at the action and showed off more of their beautiful and unique campus.

iOS

Getting into the actual content of the presentation, we’ve got the details about iOS 14 now, which has been a subject of speculation for several months. I was pretty excited to see what new features they would be offering, since I spend a lot of time on my phone, and I was not disappointed: an App Library, widgets, and picture-in-picture, plus improvements to Siri, Messages, Maps, CarPlay, and the App Store, are all on the menu.

App Library

The App Library will be a new page on the home screen that sorts your applications for you, to make it easier to find what you’re looking for among pages of apps. I currently have five pages of apps on my phone, which makes it impossible to find things without looking around for a while or using the search. The App Library will automatically group apps into categories, with the ones you use most at the top for easy access and a list of suggested apps similar to what appears at the top of the search box now. You’ll be able to hide pages of apps as well, to reduce clutter.

I'll have to wait until I have it in my hand for a while to say whether I really use this a lot, but my initial impression is that it will be very helpful.

Widgets

Widgets were a widely expected feature, although I heard some last-minute speculation that they would not release widgets after all. But Apple came through with the ability to resize the widgets that are currently in the "today view" and place them on the home screen with your apps. Obviously they've taken this idea from Android, which has done it for quite some time, although I do like the way Apple's flavor looks, and that all the apps are on the home screen as usual with the widgets added to that, rather than needing to add the apps manually to the home screen as in Android (at least Samsung's flavor, I can't speak for them all).

I know for a number of Android users the lack of widgets on the home screen was a barrier to entry for iOS, so Apple has definitely taken a shot at that. I honestly don't know whether I'll use this or not. It'll depend on how it shakes out with the apps that I use, but I could see having the weather and my tasks on the home screen potentially being helpful.

One thing I am excited about is the automatic widget they slipped in at the end, which will show different things depending on the time of day and what it thinks you want to see. The Siri suggested apps work so well for me that I'm thinking this could be a really nice way to get multiple functions into the same space.

Picture-in-picture videos. Finally.

In iOS 14, a video you're playing will automatically go into picture-in-picture when you go to another app, so you can respond to a message without stopping what you're watching. Apple of course showed this off with AppleTV, but hopefully it will work for Facebook Messenger video calls, Netflix, YouTube, and other third party apps as well.

Currently, when someone on Android is on a Messenger video call, they can go look at another app without pausing the video stream, but on iOS it turns my camera off as soon as I leave Messenger, which is a bit annoying. I'm sure this was intended as a security feature, but in iOS 14 it looks like Apple has opted instead for an indicator at the top of the screen showing when the camera is recording. To be honest, I'd kind of prefer a small hardwired LED similar to what the Mac has, just to feel totally secure that it couldn't be bypassed, but I know Apple takes privacy and security seriously so I'm sure they've done their best to make sure the on-screen indicator comes on.

Siri - Better UI, unclear whether she'll work any better

One of my favorite quotes from WWDC was probably, "As much as Siri's advanced over the years..." Yeah, it's still crap. Compared to my Google Home, Siri seems completely useless. She misunderstands half the things I say, especially for the most important things like sending a text in the car, and without access to Google's search engine she can't return the level of information in response to a question that Google can. She also can't understand multiple step instructions or follow-up questions.

But, supposedly Siri is going to be smarter now. There weren't really a lot of details on how that will look. What we did get details about are UI changes, audio messages, and a translation app.

The UI is getting improved so that Siri won't take over the entire screen anymore. Instead, the icon will appear at the bottom to let you know she's listening and the results will appear at the top, with your app still visible in the background. This extends last year's changes for CarPlay, and is a great feature, especially on the iPad. I don't need to see 11 inches of Siri.

Siri seriously is getting a couple of helpful new features, though.

First off, you’ll be able to send audio messages now. You can currently record and send a clip in your text string, but not with Siri. Apple says audio messages will be great if you want to convey the emotion in your voice, but personally I’m thinking this will be nice so I don’t have to fight with Siri in the car anymore trying to get her to text the right thing...

The second thing is a translation app, which should allow for real time conversations between yourself and someone speaking a different language. It’s not a novel idea, but what they do offer over other brands is that Apple’s translation app will work entirely offline and keep your conversations completely private. Apple doesn’t always feel the need to be the first to create something, often they wait a while and then see how they can do it better.

Maps

One area they waited a while and didn’t do any better at all, though, is Maps... Apple’s Maps does offer greater privacy than Google Maps or Waze, so it’s an obvious choice for a privacy conscious person, but Google Maps and Waze both offer better features and directions in my opinion. Nevertheless, Maps is getting some updates with guides to offer local recommendations and cycling maps, both things which Google Maps already has. The cycling directions, however, will only work in some select cities, which is really the biggest problem with Apple Maps as a whole. I'm sure it works great in LA or NYC, but it's not doing much for me in central Ohio.

Messages

Messages is getting a few basic improvements this year: pinned conversations, new Memoji options, threads, and mentions (finally!)

I was hoping for read receipts in group messages, similar to Facebook Messenger, but sadly there was no mention of that. I was also hoping to be able to see who had reacted to messages, but I realized last night that you can long-hold on a message to see that already, so if you also didn’t know, you’re welcome.

CarPlay

When I’m driving my car, which I haven’t been doing much lately, I’m a big CarPlay user. Apple said they were making big improvements, but most of what they mentioned didn’t really have to do with CarPlay itself. They are adding some new wallpaper options, so maybe if they allow custom ones I can put a picture of my car in my car, but that’s not really a useful feature... They’re also going to support apps for additional activities like parking and food ordering, which could potentially be useful.

The primary new feature is that now your phone will be able to act as your car key. You can tap the phone against the car's NFC reader to unlock it, and start the car when the phone is on the charging pad, which Apple claimed is more secure than traditional keys. You can share your keys and add restrictions for certain drivers as well. It certainly seemed to work well on the 2021 BMW 5 Series in the demo, but I doubt it’s coming to my 2014 Ford Focus any time soon.

App Clips

To be honest, it was a bit unclear how these work. Essentially, though, App Clips appear to be a smaller version of an app for specific features that you’ll be able to run without the full app installed. You’ll be able to launch them via an NFC tag, QR code, or website, or share them in Messages, apparently. They'll be able to provide services like renting a scooter or bicycle from your phone without needing to get the app.

Calls will no longer take over the entire screen

Apple mentioned this under their iPadOS segment, but I’ll include it here since it applies to both. Phone calls won’t take the entire screen anymore. Instead, they will appear in a notification at the top of your screen. It’s a small change but a huge improvement.

iPadOS

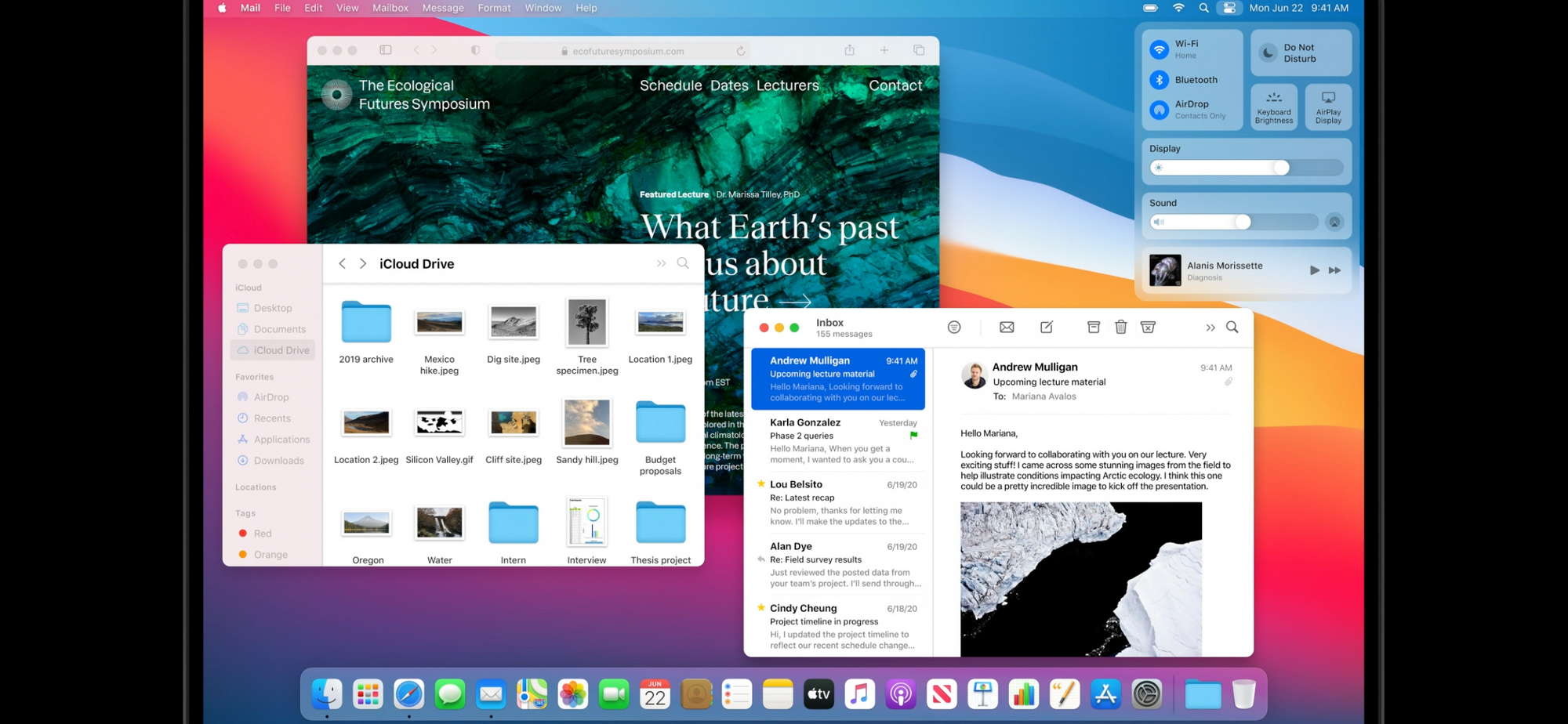

Evidently I took this screenshot at the same time as adjusting the volume lol

iPadOS, of course, remains similar to iOS, so it will be getting many of the same new features, such as widgets on the home screen and the new Siri design.

Sidebars

Some of Apple's built in apps like Photos, Notes, and Files will now have a sidebar to give you some additional power, letting iPadOS diverge a bit more from iOS and start looking slightly more like macOS.

Music

Music is getting some UI improvements, to take better advantage of the large screen and have a full screen player with lyrics. I always use Spotify, though, so this doesn't really affect me.

Search

Currently to perform a search on the iPad you’ll need to go to the home screen and pull down, just like on iPhone, and it takes over the entire screen. The new search will be a small field in the center of the screen, over top of your apps, similar to Spotlight on macOS, and will not only launch apps but also search in apps, give you information, search the web, et cetera.

Given the type of work that’s done on an iPad, compared to a phone, this change makes a lot more sense, and also positions the iPad to be a bit more powerful like a Mac.

Apple Pencil improvements

The handwriting support on the iPad is getting some updates. Not only will notes have better handwriting recognition and support snapping shapes and moving things around, but also all text fields on the iPad will now support handwriting! Apple is calling this complete game changer “Scribble.” Some Android devices already have similar features, but now on the iPad you will be able to write in a text field and it will be converted to text!

Many times I’ve wished I could just write somewhere, so I’m very excited about the prospect that I’ll no longer have to open the silly on screen keyboard or set my iPad up somewhere to use an actual keyboard, instead while I’m sitting on the bed drawing I’ll be able to just write in a text field.

It remains to be seen whether this will work in third party apps, but I certainly hope that it will out of the box or that third parties will be able to support it.

AirPods Pro are getting better surround sound

That’s about all Apple had to say, and I don’t own AirPods, although I’ve considered getting some.

Apple Watch

I don’t have an Apple Watch either, because I don’t have infinite money, but it’s getting a few new features so I’ll describe them here. Just be aware that I’m not familiar with the watch...

The Apple Watch is getting some new watch face configurations and the ability to share them.

It will also now be supporting dance as a workout type. I don’t really dance, but I do appreciate the effort that has gone into designing a system inside the watch to detect when you are dancing and how exactly your entire body is moving from your wrist.

The Apple Watch could already be used to track your sleep with third party apps, but Apple is now introducing their own built-in sleep tracking. It will help you set goals for getting to bed on time, and rules on your phone will help you enforce them, and of course you’ll get sleep tracking and improved trends in the Health app.

In a move designed specifically for the problems of our modern world, the watch will now also detect when you start washing your hands, using the gyroscopes and microphones, and will then start a countdown to ensure that you wash your hands for a full 20 seconds. It will also chime if you try to stop early. No more cheating on the hand washing!

Privacy - A fundamental human right

Apple restated their belief that privacy is a fundamental human right, and backed it up with the announcements of a new set of features for managing your privacy across their devices. Developers will now let you upgrade your existing account to use Sign In with Apple, which feels more secure, although I don’t know the details. You’ll now be able to share your approximate location with an app, as well, which is great for things like weather apps which need to know what town you’re in but don’t need your exact address. There will be more visibility for camera and microphone use, with an indicator at the top of the screen to ensure you know when someone is watching or listening. Apps will now get the same cross-site tracking protection that Safari has, with requirements for them to request permission from the user before tracking you across other apps or websites. And, in the App Store, you’ll be able to see the highlights of an app’s privacy information before downloading it so you can be sure it’s something you’re comfortable with.
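To make the approximate location idea a bit more concrete, here is a minimal sketch of one way a location could be made coarser before it is handed to an app. This is purely my own illustration, not how Apple does it: the function name `coarsen_location`, the rounding approach, and the sample coordinates are all assumptions. It relies only on the fact that a tenth of a degree of latitude is roughly 11 km, which is plenty for a weather forecast and useless for finding someone's front door.

```python
def coarsen_location(lat: float, lon: float, decimals: int = 1) -> tuple[float, float]:
    """Round coordinates so they identify an area rather than an address.

    Hypothetical helper for illustration only. One decimal place of
    latitude is roughly 11 km; each extra decimal is about 10x more precise.
    """
    return (round(lat, decimals), round(lon, decimals))


# Made-up precise coordinates somewhere in central Ohio.
precise = (39.9612, -82.9988)
print(coarsen_location(*precise))  # (40.0, -83.0)
```

A weather app would only ever see the rounded pair, which is the whole point: it can still pick the right town without learning your street.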

Privacy has been a major focus for Apple in the last few years, and has made them distinct from other data hungry companies. Apple products cost more than the competition, but the extra price buys you back the right to your data. Google, for example, can afford to sell phones for less because they’ll profit off the information they’ll acquire from their users, while Apple charges a bit more and puts your privacy first.

Home

Apple has tried for years now to get into the smart home field, and frankly hasn’t done that well. The limitations of Siri and high cost of their smart speakers have ensured that most people will buy from Google or Amazon instead, even at the cost of privacy. The downside of this has been that many internet of things manufacturers have opted not to support Apple HomeKit at all, making it more difficult to interact with their products from your iPhone or other Apple device.

I would like to buy some smart plugs and/or bulbs for my room, because I’m particular about the lighting and it’s tedious having to walk around and turn several lights off when I leave (first world problems, I know.) But, unless I purchase more expensive devices, I would only be able to interact with them natively from my Google Home, not my iPhone or iPad.

Apple announced that they have made HomeKit open source, and partnered with Amazon and Google to make a standard for internet of things devices, which I can only hope means that in the future all devices will support all three ecosystems. If that’s the case, it should be much easier to buy smart things in the future without having to fret over whether it will connect to everything the way you want it to.

Apple has partnered with these brands in an effort to improve their Home capabilities.

Additionally, they have announced native adaptive lighting, to adjust the color of your lights throughout the day, and their own built-in security camera interface which is more privacy conscious than some of their competitors. Assuming you have a HomePod and Apple TV (i.e. you’re a rich Apple fan) your HomePod can now announce who’s at your door while your Apple TV displays the video of them, automatically. I will say that’s pretty cool. It’s almost worth buying a HomePod and Apple TV, just for the cool factor. This is the kind of world I want to live in: one with tons of cool factor even though it serves literally no practical purpose.

macOS Big Sur

Okay, Apple, I know that’s a coastline in California and fits your current naming convention, but really? It sounds like you’re saying, “big sir.” For as big a deal as this OS is, it deserves a better name.

All that aside, Big Sur is bringing some major design changes to the Mac. The interface will start to resemble the iPad a bit more, with more subtle buttons which highlight as you mouse over them, more rounding, flattened window headers, a translucent menu bar, and a floating dock. I genuinely like the iPad better than the Mac at this point, so it was certainly time for a refresh. It’s 2020, and Big Sur makes the Mac feel sleek and modern, the way a Mac should.

Adding to the moves to make the Mac a bit more like the iPad, Apple has chosen to add the control center to macOS. You’ll be able to drop anything you want from it into the menu bar, but you’ll also be able to see all the controls in a nice panel. They’ve also re-designed the Notification Center, combining it with your widgets, which will look like they do on iOS, and grouping notifications just as the iOS does.

There are several changes throughout the Mac’s apps as well:

Messages

Messages is getting the same Memoji, pinned conversations, and so forth, just like iOS.

Maps

I don’t know who uses Maps on the computer, but it’s getting the same features iOS is.

Catalyst

This is Apple's system for developers to be able to build an app that works across multiple devices. In theory one codebase will be able to run on iPhone, iPad, and Mac. Catalyst was introduced a year ago, but was apparently somewhat difficult to use. Hopefully these new updates mean it’ll be a bit easier to develop with.

Safari

I know a lot of people prefer to use Chrome, but I still use Safari myself. I would say it’s already quite fast, but Apple claims it is getting even faster now. Safari is a solid choice for maintaining basic privacy online, and now it will show you a privacy report for each website to give you awareness of how it is tracking you, as well as preventing them from doing so. It will also monitor for security breaches on websites you have saved passwords from to alert you if they may have been compromised.

The biggest new thing, however, is that we’ll be getting extensions which can be brought over from other browsers, as well as a way to browse extensions in the App Store. I understand Apple was trying to make a move for better security a couple years ago when they essentially took extensions away, but it will be nice to finally have them back again. Back in the day extensions could be made specifically for Safari and were available from a Safari extensions website. Then they moved to the current architecture with extensions on the App Store that have to be built differently. This broke our old extensions, most people didn’t make new ones, and the ones that do exist are difficult to find. Going forward not only will extensions be in a specific section of the App Store, but we’ll also be able to use extensions from other browsers. From what I’ve heard online, you can now install Chrome extensions in Safari. That’s a big swipe at Chrome and a huge improvement to Safari. We’ll also get privacy protections around our extensions, so they can only access certain websites and only for the amount of time we allow.

Some other smaller UI changes are being made: you can customize the start page more, tabs will have previews when you hover over them, and there will be inline translation that can happen automatically and immediately. Helpful when you forget to turn off your foreign VPN.

Mac moving to Apple CPUs

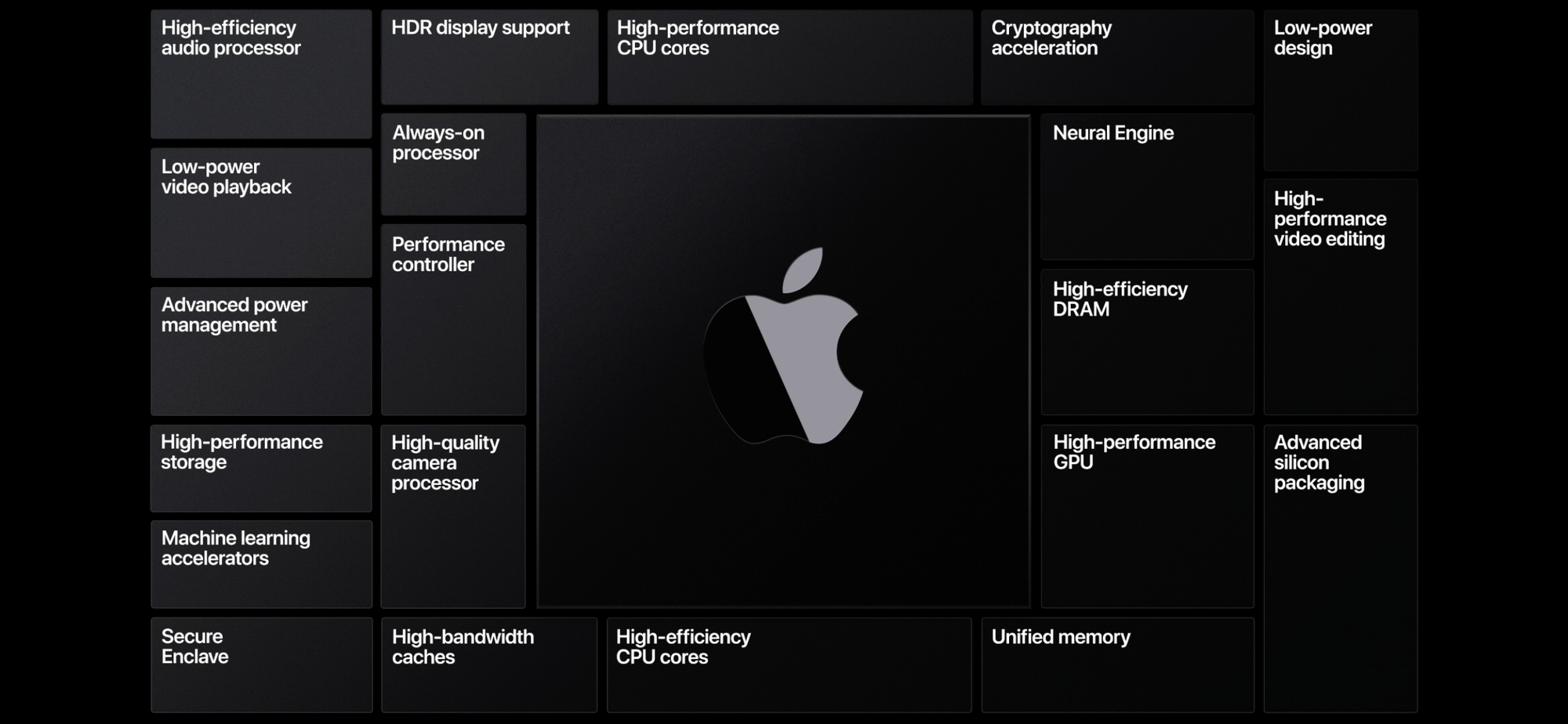

These are the elements Apple intends to include in their new processors

Yesterday was a very historic day for Apple, and one that I’ve been looking forward to for a couple of years since the very first rumors started.

Now it’s official.

Apple will start putting their own processors in their computers.

Apple entered the chip business in order to get better silicon into the iPhone, because nobody else had what they needed, and they still make the best mobile chips in the world. When they made the iPad they needed to build their own processors to support the Retina display, and have increased their speed, they claim, by 1000x in the last 10 years. I have an iPad Pro, and I can confirm, it’s very fast. At this point my iPhone XS and 2018 iPad Pro both feel faster than my 2016 MacBook Pro with its i5. Using laptops makes me sad now.

To date Apple has been putting 7nm chips in their mobile devices, and there is strong evidence to suggest that the iPhone 12 will include a 5nm chip. By comparison, the latest Intel chips are 10nm. On paper, that puts Apple's next chips at half the feature size of Intel’s, so more transistors can be put in the same amount of space, which generally means faster and more efficient processors. Node names aren't measured the same way by every manufacturer, though, so treat that as a rough comparison rather than an exact one. Additionally, by making their own chips Apple can design them exactly for the needs of their software.
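For a sense of why a smaller process node matters, here is some back-of-the-envelope arithmetic. It's a rough sketch under a big assumption: that the node name is a literal feature size, so halving it lets you fit four times as many transistors in the same area. In reality node names are closer to marketing labels and differ between manufacturers, so the function below (`naive_density_ratio`, a name I made up) gives an upper-bound intuition rather than a real benchmark.

```python
def naive_density_ratio(node_a_nm: float, node_b_nm: float) -> float:
    """How many times more transistors fit in the same area on node A
    than on node B, ASSUMING the node name is a literal linear feature size.

    Area scales with the square of the linear dimension, hence the ** 2.
    Real chips do not follow this exactly; this is an illustration only.
    """
    return (node_b_nm / node_a_nm) ** 2


# A rumored 5nm chip versus a 10nm chip, under the naive assumption above.
print(naive_density_ratio(5, 10))  # 4.0
```

So "half the size" would naively mean up to four times the transistors in the same space, which is why the jump from 7nm to 5nm gets people excited even with all the caveats.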

Going forward, Apple will be able to make custom hardware for their software, to maximize performance and battery life, and integrate a Secure Enclave, custom GPU, neural engines, image processing engines, and so on. Additionally, this move will change the Mac over to the same CPU architecture as the iPhone and iPad, which should be very handy in the push for apps to work across all three. In fact, iPhone and iPad apps will immediately be able to run natively on the Mac as well, inside of a window. The interface will of course look the same as it does on the mobile device, and I’m not sure it’s something I see getting used a whole lot, but sometimes you do things just because you can. Once developers make the changes they need, however, this will enable them to use the same logic with different front-ends to build an application that looks great on iPhone, iPad, and Mac.

What about my old apps?

One concern about the move from Intel's x86 processors to Apple's ARM ones would be backwards compatibility, since a program compiled for the x86 instruction set will not run on ARM. Fortunately for us this is not the first time Apple has completely changed their processor architecture: in 2006 Apple switched from PowerPC to Intel. I've actually been a Mac user just long enough to remember that, because my parents purchased an Intel MacBook the next year. I remember occasionally downloading an old PowerPC app and using Rosetta to translate it for the new Mac, and I don't recall having any issues. If 11-year-old me somehow knew how to do that, I assume the current transition will work as well.

Apple is introducing new translation software, which they've creatively named Rosetta 2. Any app that the developers don't get rebuilt for the new chips right away will be able to be translated with Rosetta 2. This is reassuring, given that when Apple took away support for 32-bit applications last year in macOS 10.15 some things just stopped working. I know there are some apps I use which developers won't get around to right away. Apple claims the transition for developers will be quick and easy, but, speaking as a developer, everything always sounds quick and easy and then ends up taking forever and being a pain.
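If you're curious which instruction set a program is actually running on, here's a small sketch using Python's standard `platform.machine()` call. The helper `describe_architecture` and its list of names are my own assumptions for illustration. The exact strings vary by operating system, and I'd expect a translated app to report the Intel architecture rather than the ARM one, though I haven't been able to try that on the new hardware.

```python
import platform


def describe_architecture(machine: str) -> str:
    """Map a machine string (as returned by platform.machine()) to a CPU family.

    Hypothetical helper: the names checked here are common values,
    not an exhaustive list.
    """
    name = machine.lower()
    if name in ("x86_64", "amd64", "i386", "i686"):
        return "x86 (Intel/AMD)"
    if name.startswith("arm") or name == "aarch64":
        return "ARM"
    return "unknown"


if __name__ == "__main__":
    machine = platform.machine()
    print(machine, "->", describe_architecture(machine))
```

A program built only for one of these families simply can't execute on the other without something like Rosetta sitting in between to translate.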

2007 MacBook

As an aside, that 2007 MacBook was really something. With shiny plastic and a glowing apple on the back, it weighed in at a mere 5.2 pounds, which was practically infinitely less than the CRT monitor and heavy tower it was replacing. It sported 1GB of RAM, a 2.0GHz processor, and a 13 inch LCD screen with just over half the resolution of today's iPhones. And, you could carry it around the house! If we'd seen a modern iPad back then we'd have probably fainted.

How fast are they?

Obviously we'll have to wait until reviewers start getting their hands on them to really get an idea, because it's always hard to tell from Apple's demos how the computer will handle in the real world. In the meantime, though, here's what we do know.

The computer in all the demos throughout the presentation was running macOS 11 Big Sur, obviously, on the latest iPad chip, the A12Z, with 16GB of RAM, as seen briefly in the video. Everything was looking very fast right off the bat, but it had Apple's $6,000 Pro Display XDR monitor attached to it, so I assumed it was probably a specced out Mac Pro that would run the demos much faster than anything most of us would be able to afford. In fact, though, Apple claims the whole thing was actually run on their Mac mini developer units, with the specs I've just listed.

Everything in the demos looked very fast. Again, it was a demo, and everything always looks good in demos. Probably only one app was running at a time, the environment was completely perfect, and Apple could follow a path they knew worked or re-film as often as needed. That being said, 16GB isn't a lot in today's RAM hungry world, and the A12Z, while it does have 8 cores, also has a modest 2.49 GHz clock speed. These numbers, however, highlight Apple's biggest strength: optimization. While other manufacturers are building operating systems to run on many different hardware sets, Apple can completely focus their efforts on optimizing for a select few. This means that they get more performance from products with lower specifications. Building their chips in-house also means that they have introduced many specialized elements in the chip to do exactly what they need. Add to that the fact that 8 CPU and 8 GPU cores aren't anything to scoff at, and you've got yourself a potentially very powerful machine.

Apple demonstrated a game downloaded straight from the App Store and translated for the new Macs, and everything seemed to be running quite quickly. Of course we didn't get to see how hot the machine got, how loud the fans were, or whether you could realistically run Discord at the same time, but I'm going to guess from my iPad with the older A12X and 4GB of RAM that we can expect Apple's new machines to perform very well.

Once these machines get out into the world I'm sure we'll see some GeekBench scores to help us compare them to other computers on the market.

macOS 11?

If you caught it above, yes, the next macOS version will be version 11, rather than version 10.16, which follows the convention of incrementing the largest version number when you make a large change that is not backwards compatible. Like, you know, changing the whole instruction set it runs on.
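The versioning convention I'm describing can be sketched in a few lines. This is a simplified illustration of the general "bump the big number on a breaking change" idea, not Apple's actual release process. The function `bump` and the labels "breaking" and "feature" are names I made up for the example.

```python
def bump(version: str, change: str) -> str:
    """Return the next version for a two-part 'major.minor' version string.

    Simplified, hypothetical rule set:
      - "breaking": not backwards compatible, so bump major and reset minor
      - "feature":  backwards compatible, so bump minor only
    """
    major, minor = (int(part) for part in version.split("."))
    if change == "breaking":
        return f"{major + 1}.0"
    if change == "feature":
        return f"{major}.{minor + 1}"
    raise ValueError(f"unknown change type: {change}")


print(bump("10.15", "feature"))   # 10.16
print(bump("10.15", "breaking"))  # 11.0
```

By that logic 10.16 would have been the "business as usual" number, and 11 is the one you reach for when you swap out the whole foundation.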

So, when can I get one?

That's a good question. Developers can get their hands on a development unit immediately, and Apple says they plan to ship the first Macs with the new chipset by the end of the year, but they also said there will be a two year transition period and they will still be releasing new Intel based Macs as well. What we don't know yet is which product lines will get the new chips first or how much they will cost. I do assume that laptops will be first in the lineup and the Mac Pro will be last, since Apple isn't positioned to release a Mac Pro scale chip yet as far as I know.

What about my old Mac?

The good news for anyone not wanting to upgrade is that Apple is planning to support Intel Macs for several years. macOS Big Sur and likely future operating systems will be available for your Intel machine, so you won't have to replace that $50,000 Mac Pro a year after you got it. Or the 2013 MacBook Pro you're still nursing.

Wait, did he just say Linux VMs??

In a surprise move thrown into the keynote along with their announcement about new chips, Apple announced that they'll be adding their own virtualization software to macOS to run Linux or Windows virtual machines. This may be necessary since the move to ARM could make it harder to get operating systems to dual boot and break existing virtualization software. Given the fact that current VM software is frequently either expensive or garbage, however, this is a welcome change. We didn't get to see a lot of details on this, because the keynote really is focused more on the public than developers, even though it's a developer conference, but I have a good feeling about it.

A health and safety message

Apple did a good job of making us forget they had produced this in the midst of a pandemic, but at the end we were reminded by a scrolling message explaining the precautions they had taken to make the film. Back to reality.

What we didn't get.

Default apps. Apple made no mention of default apps in the keynote, a feature many people were hoping for which would allow you to select to always open webpages in Chrome or play songs on Spotify. According to The Verge, we will be able to select default mail and browser apps, which is a start. But I was really hoping to be able to stop telling Siri to play a song "on Spotify" every time.

Why are iOS 14 default apps limited to just browser and email apps? - The Verge

Two things not in the keynote

There were two noteworthy features not mentioned in the keynote, which I have seen online since.

First, iOS is now rumored to let you zoom in farther on your photos. Enough said.

Second, you can now tap the back of your iPhone, assuming it's relatively recent, to run a shortcut or some pre-defined actions, like summoning Siri. You'll be able to set up a double-tap and triple-tap. Personally, I want a double-tap to open my camera. The faster I can open the camera the better.

All images in this article with the exception of the 2007 MacBook Pro are screenshots of Apple's WWDC Keynote. They are used under fair use for editorial purposes. Fair Use Notice